In the copious free time I’ve had since my daughter was born a year ago (hi Emily!), I have been reading Sydney Lamb’s work on what he calls Neurocognitive Linguistics. He started out in the 1960s with a relational network model of language that was quite different from the highly symbolic models others were using. Although it has been mostly ignored since then, the model turns out to reflect, to a surprisingly high degree, what the brain actually does when it processes language. It is starting to see a resurgence, particularly in the field of computational linguistics.

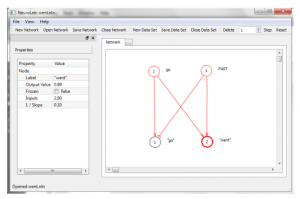

I have written the beginnings of a program to experiment with the model. The Neurocognitive Linguistics Lab lets you create relational networks graphically and then simulate them over time to see how neural activation and inhibition travel through the network. It also has a handy data collection feature that saves the data from your simulations in CSV format for further analysis.
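The post does not spell out the lab’s update rule, so here is a minimal sketch of the general idea under assumed rules: each node’s activation is a number in [0, 1], positive connection weights excite and negative weights inhibit, and all nodes update synchronously once per tick. `Connection`, `Network` and `simulateToCsv` are hypothetical names, not the lab’s API, and the CSV layout (a `t` column followed by one column per node) is likewise an assumption.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <sstream>
#include <string>
#include <vector>

// Hypothetical connection type: a positive weight excites the target node,
// a negative weight inhibits it.
struct Connection {
    std::size_t from;
    std::size_t to;
    double weight;
};

// Hypothetical network: one activation level in [0, 1] per node, plus a
// constant external input (e.g. a stimulus) per node.
struct Network {
    std::vector<double> activation;
    std::vector<double> external;
    std::vector<Connection> connections;

    // One synchronous time step: every node sums its external input and its
    // weighted incoming activations (from the previous tick), then clamps
    // the total to [0, 1].
    void step() {
        std::vector<double> input = external;
        for (const Connection& c : connections)
            input[c.to] += c.weight * activation[c.from];
        for (std::size_t i = 0; i < activation.size(); ++i)
            activation[i] = std::clamp(input[i], 0.0, 1.0);
    }
};

// Run the network for `steps` ticks and return CSV text with a header
// "t,n0,n1,..." and one row per tick, starting with the initial state at t = 0.
std::string simulateToCsv(Network net, int steps) {
    assert(steps >= 0);
    assert(net.external.size() == net.activation.size());
    std::ostringstream csv;
    csv << "t";
    for (std::size_t i = 0; i < net.activation.size(); ++i) csv << ",n" << i;
    csv << "\n";
    for (int t = 0; t <= steps; ++t) {
        if (t > 0) net.step();
        csv << t;
        for (double a : net.activation) csv << "," << a;
        csv << "\n";
    }
    return csv.str();
}
```

For example, with `activation = {0, 0, 0}`, `external = {1, 0, 0}` and connections `{0, 1, 1.0}`, `{1, 2, 1.0}`, `{2, 1, -1.0}`, node 0 acts as a constant stimulus, node 1 excites node 2, and node 2 inhibits node 1 in turn. From tick 2 onward, nodes 1 and 2 cycle through the same four states; without the inhibitory `{2, 1, -1.0}` connection, both simply switch on and stay on.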

Source and binaries are available at the project website, under the terms of the BSD License.

The program is written in C++ using the cross-platform Qt framework. It has a convenient plugin system for implementing new kinds of network entities. It simulates the neural network as an asynchronous automata network, using Qt’s parallel processing facilities to take advantage of multiple cores automatically.
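The sketch below illustrates the two architectural ideas in that paragraph using standard C++ only (no Qt). Entity kinds are plugins: each implements an abstract `Entity` interface and is registered under a name, so the simulation code never refers to concrete types. Updates are asynchronous in the automata-network sense: nodes are visited one at a time in a random order, and each new state is immediately visible to the nodes updated after it. All names here (`Entity`, `registry`, `build`, `asyncSweep`, and the `on`/`and`/`or` node kinds) are hypothetical, states are assumed to be binary, and the sketch runs on a single thread, so it does not show how the lab spreads work across cores.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <functional>
#include <map>
#include <memory>
#include <numeric>
#include <random>
#include <string>
#include <utility>
#include <vector>

// Hypothetical plugin interface: each kind of network entity computes its
// next (binary) state from the current states of the whole network.
class Entity {
public:
    virtual ~Entity() = default;
    virtual bool nextState(const std::vector<bool>& states) const = 0;
};

// Example plugin: a clamped input that is always active.
class OnNode : public Entity {
public:
    bool nextState(const std::vector<bool>&) const override { return true; }
};

// Example plugin: active only when all of its inputs are active.
class AndNode : public Entity {
public:
    explicit AndNode(std::vector<int> inputs) : inputs_(std::move(inputs)) {}
    bool nextState(const std::vector<bool>& s) const override {
        return std::all_of(inputs_.begin(), inputs_.end(),
                           [&s](int i) { return s[i]; });
    }
private:
    std::vector<int> inputs_;
};

// Example plugin: active when at least one input is active.
class OrNode : public Entity {
public:
    explicit OrNode(std::vector<int> inputs) : inputs_(std::move(inputs)) {}
    bool nextState(const std::vector<bool>& s) const override {
        return std::any_of(inputs_.begin(), inputs_.end(),
                           [&s](int i) { return s[i]; });
    }
private:
    std::vector<int> inputs_;
};

using Factory = std::function<std::unique_ptr<Entity>(std::vector<int>)>;
using Network = std::vector<std::unique_ptr<Entity>>;

// Registry of entity kinds: adding a new kind only requires registering
// another factory; build() and asyncSweep() never name concrete types.
std::map<std::string, Factory>& registry() {
    static std::map<std::string, Factory> kinds = [] {
        std::map<std::string, Factory> m;
        m["on"] = [](std::vector<int>) { return std::make_unique<OnNode>(); };
        m["and"] = [](std::vector<int> in) { return std::make_unique<AndNode>(std::move(in)); };
        m["or"] = [](std::vector<int> in) { return std::make_unique<OrNode>(std::move(in)); };
        return m;
    }();
    return kinds;
}

// Build a network from (kind, input indices) specs; registry().at() throws
// std::out_of_range for an unregistered kind.
Network build(const std::vector<std::pair<std::string, std::vector<int>>>& specs) {
    Network net;
    for (const auto& [kind, inputs] : specs)
        net.push_back(registry().at(kind)(inputs));
    return net;
}

// One asynchronous sweep: visit every node once in a random order, writing
// each new state immediately so later nodes in the same sweep see it.
void asyncSweep(const Network& net, std::vector<bool>& states, std::mt19937& rng) {
    assert(net.size() == states.size());
    std::vector<std::size_t> order(net.size());
    std::iota(order.begin(), order.end(), std::size_t{0});
    std::shuffle(order.begin(), order.end(), rng);
    for (std::size_t i : order) states[i] = net[i]->nextState(states);
}
```

Because the `and` and `or` nodes here only switch on once their inputs are on, a network such as `build({{"on", {}}, {"on", {}}, {"and", {0, 1}}, {"or", {2}}})` reaches the same fixed point (all nodes active) whatever order the shuffle produces; the order only affects how many sweeps it takes, at most three for this three-level network.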